The Art of Coding Compositions

The artist Aaron Alden shares what it’s like to see life through “resonant pixels and waves.”

Written and photographed by Alex T. Williams

About 10 years ago, I purchased a wooded parcel in Esopus, New York. It wasn’t large, but compared to my cramped Brooklyn apartment it felt like a national park. It became my place to think, experiment, and mess around with tools.

Unbeknownst to me, just a mile down the road another New York City–based creative mind was doing something similar, camped out in an Airstream, hatching his next big idea over cold beers. It would take a pandemic and a mutual friend to bring us together. That friend had turned his Hudson Valley home into a kind of informal salon for curious, creative people, and Aaron Alden had taken over the garage as a laboratory for his own professional reinvention.

Alden began his career composing music for advertising, but the pandemic and broader shifts in the media industry forced a reset. What followed was less a career pivot than a recombination of skills he had been collecting for decades: programming, virtual-reality experimentation, generative software, sound design, and a fascination with translating real-world inputs (wind, motion, heart rate) into visual and sonic experiences.

Today Alden works as an immersive installation artist, designing complex interactive systems that transform data and human interaction into art. His projects—created for clients like Porsche owners’ clubs, Cascada Thermal Springs + Hotel, and Palo Alto Networks—blend software, hardware, light, and sound into environments that are part engineering experiment, part sensory spectacle.

In our conversation, Alden discusses creative evolution, composing with generative systems, and working in a territory where unruly data, strange machines, and human intuition intersect to produce unexpected outcomes.

You spent a lot of years composing music for film and advertising, correct?

Mostly advertising. I did a lot of car commercials—kind of specialized in car commercials. I did a lot of tech, a lot of other industries, but car commercials were the main focus.

Toward the end of the company’s trajectory we tried shifting to focus only on film trailers. In the film trailer world you write the music ahead of time and then try to get it placed. Unfortunately we did this right during COVID as the film industry was shutting down, so that was kind of the end of that.

How did you get on the path to building generative installations?

When you’re making music for film, there’s a lot of odd technology that you have to learn. It wasn’t necessarily intentional, but along those two decades of writing music I picked up a lot of side skills and learned a lot of odd technology.

I helped ad agencies expand their VR capabilities, and that led to me making strange art installations where nature was the driving force making the output of the sound in various ways.

So it was kind of just a long process of picking up odd tech skills and then refocusing them all with a clearer direction in the interactive world.

So it was maybe less of a hard pivot career-wise and more following the same curiosity and tools you had already learned, just applying them differently?

Everything that seemed unrelated suddenly became related in this new, odd industry of interactive generative art, where you have to take engineering skills, design skills, knowledge of music—just all these things rolled together in a sensible package.

Where did you feel most native in that world, and where were the biggest learning curves?

I feel like I had a strong intuition that we could be doing more with generative art to make work that was fascinating to people in a way where they saw themselves or data they created come out in the work.

I’ve always been doing projects that translate one form of input into another, whether it’s windmills in an Icelandic field generating tones as they pass a magnetic sensor, or people’s workout data changing how a piece of artwork looks on the wall.

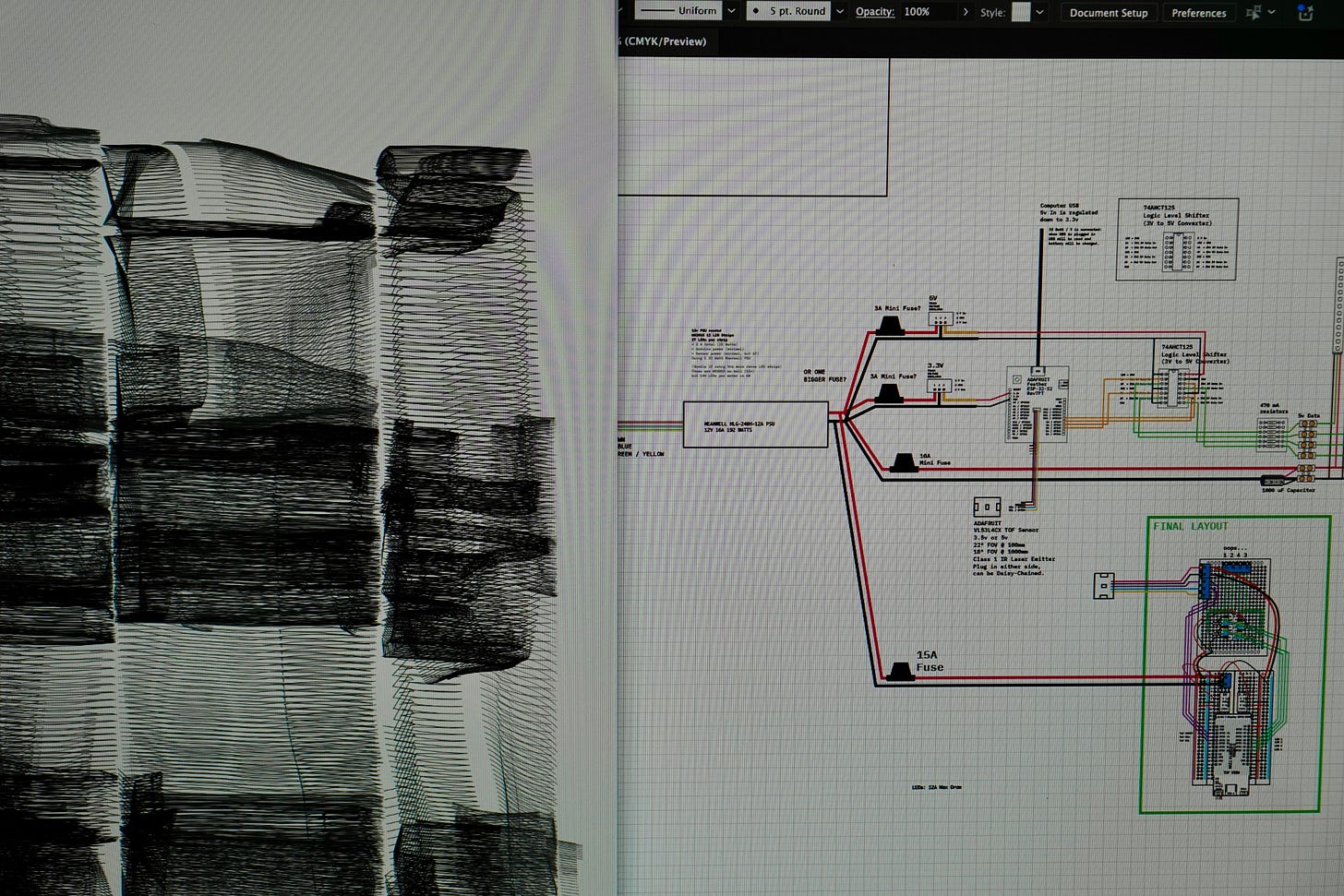

Earlier on, the tools weren’t quite there to do these projects as powerfully as they can now. The program I use—TouchDesigner—is like a melting pot for code, data, and media: Anything you throw into it, you can translate into some other form of output.

Of course, code has been able to do powerful things for a long time, and now everyone’s throwing their hat in the ring and doing crazy things with it. So I balance my time between node-based programming in TouchDesigner and code-based projects.

Across music, code, installations, and hardware, it seems like you’re designing systems rather than finished objects.

I never thought of it that way, but I guess that’s right. I’m not creating a JPEG necessarily, though sometimes I am. I think it’s almost accidental that I’m doing that, because I’m attracted to what the outcome is, but to get there requires these complicated systems. Sometimes the end result I have in mind requires learning new tools, and then learning and experimenting with those tools leads to surprising outcomes.

Do you feel like you’re still composing, just with different raw materials?

Absolutely. I’ve always played with analog synthesizers. It was only when I started experimenting with modular synths that I really understood what every part of the instrument was doing. That translated directly to design philosophies of modular thinking—building systems that rely on unique and odd parts that come together.

When I was doing sound design with cars, the sound of an engine would go up and down in pitch. Engine sounds are identifiable, and I always thought of pitch as musical content. My tagline on Instagram is “resonant pixels and waves” because the physics of waves in sound and light have related properties. I see them as having points of overlap.
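Editor's note: As a rough illustration of treating engine pitch as musical content, here is a minimal Python sketch that converts engine speed to a firing frequency and then to the nearest note name. It is not Alden's method. The six-cylinder default, the function names, and the use of firing frequency as a stand-in for perceived pitch are all assumptions made for this example; a real engine sound contains many harmonics.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def firing_frequency_hz(rpm: float, cylinders: int = 6) -> float:
    """Firing frequency of a four-stroke engine.

    Each cylinder fires once every two crankshaft revolutions.
    The six-cylinder default is an assumption for illustration.
    """
    return rpm / 60.0 * cylinders / 2.0


def nearest_note(freq_hz: float) -> str:
    """Nearest equal-tempered note name, with A4 tuned to 440 Hz."""
    midi = round(69 + 12 * math.log2(freq_hz / 440.0))
    octave = midi // 12 - 1
    return f"{NOTE_NAMES[midi % 12]}{octave}"


if __name__ == "__main__":
    # Sweep the engine speed and print the note each speed lands closest to.
    for rpm in (1000, 2000, 4000, 6000):
        freq = firing_frequency_hz(rpm)
        print(rpm, "rpm ->", round(freq, 1), "Hz ->", nearest_note(freq))
```

Doubling the engine speed doubles the frequency, which raises the note by one octave.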

How often do you hit the limits of software tools and start bending or hacking them?

I feel like my answer isn’t the right one for this article, because I’m the weak link. Tools are very powerful these days. TouchDesigner is like a blank page. There are nodes that help you do certain functions along the way, but it’s really your own recipe.

One of the problems I have with my data visualization projects is that I’m trying to make a product that doesn’t yet have a place to be shown. It would be great if I could get the data from my watch to sync to my smart TV, but even the smartest TVs are still pretty dumb. So I’m working on how to make those connections stronger and more seamless for the user. Sometimes you have to create your own solution.

You recently got a robot arm. What was that for?

The robot is an industrial robot. I originally wanted to get it to create a 3D printer. People have been doing interesting artistic and architectural design things with them for a long time. I have a very expensive, very small robot. To do what I really want, I need a much bigger one, but learning this helps me get started.

Right now we’re working with Porsche Studio Portland, where we’re decorating macarons for an interactive exhibit. The user picks a Porsche color and the robot paints a macaron in that color. I’m having to hack this thing because the robot isn’t designed for a user to walk up and tell it what to do, so we’re building a small “dumb computer” with an ESP32 that simply tells the robot which program to run. [Editor’s note: An ESP32 is an inexpensive and powerful microcontroller.] We keep the dumb computer doing one task and the smart computer doing its own tasks, and they’re kind of unaware of each other.
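Editor's note: The “dumb computer” idea can be sketched in a few lines of Python. This is an illustration of the pattern only, not the code running in the exhibit. The color names, program numbers, and the `RUN n` message format are hypothetical, and `send` stands in for whatever link actually connects the microcontroller to the robot.

```python
from typing import Callable, Optional

# Hypothetical lookup table: visitor's color choice -> robot program number.
PROGRAM_FOR_COLOR = {"red": 1, "yellow": 2, "blue": 3}


def select_program(color: str) -> Optional[int]:
    """Return the program number for a color, or None if the color is unknown."""
    return PROGRAM_FOR_COLOR.get(color.strip().lower())


def encode_command(program: int) -> bytes:
    """Build a one-line ASCII message (format invented for this sketch)."""
    return f"RUN {program}\n".encode("ascii")


def handle_choice(color: str, send: Callable[[bytes], None]) -> bool:
    """Translate one visitor choice into one command; ignore unknown colors."""
    program = select_program(color)
    if program is None:
        return False
    send(encode_command(program))
    return True


if __name__ == "__main__":
    sent = []  # stands in for the wire to the robot
    handle_choice("Blue", sent.append)
    print(sent)
```

The dispatcher knows nothing about painting, and the robot knows nothing about visitors. Each side does one job, which is the separation Alden describes.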

What else is happening here in the garage lab right now?

Seventeen different projects all at once on top of each other. That makes it difficult.

At the moment, I’m working on the Porsche exhibition I mentioned. There will be four interactive exhibits: a giant kaleidoscope where a user can turn a steering wheel and transform live video into kaleidoscopic imagery; the robot decorating cookies; an interactive art car where users pick from palettes and patterns and decorate the car digitally, then get a print of their art car; and a cymatics piece, where I’m taking the sound of Porsche engines and vibrating carbon-fiber plates to create sand patterns. We’ll film those sand patterns and project them through transparent fabric throughout the room, creating cascading immersive elements.

I’m also working on an interactive light installation for a hotel spa in Portland. We’ll be building a saturated color experience that takes guests on a journey through space.

A lot of your work translates real-world data into visual or sonic outcomes. Why use those inputs instead of composing everything from scratch?

There’s a lot of art in the world that says, “I was inspired by space,” and then someone composes something based on their feelings about that inspiration.

But I think we can go further. We can set up generative systems where we compose the rules that define how input responds and creates output.

The music I compose has a very small audience. (If you want to listen to my ambient sci-fi music, please do.) But I find there’s more interest in art when there’s more context behind it. When we have the power to create generative systems based on rules we establish, it’s fascinating to see how natural data or inputs alter the outcome.
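Editor's note: To make “composing the rules” concrete, here is a minimal Python sketch of one such rule, mapping a heart-rate reading to a color. It is not taken from Alden's projects. The 60 to 180 beats-per-minute range, the blue-to-red hue sweep, and the function names are assumptions chosen for illustration.

```python
import colorsys


def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping at the ends."""
    t = (value - in_lo) / (in_hi - in_lo)
    t = max(0.0, min(1.0, t))
    return out_lo + t * (out_hi - out_lo)


def heart_rate_to_rgb(bpm):
    """Rule: a resting heart rate reads as blue, a peak effort as red.

    The bpm range and hue endpoints are assumptions for this sketch.
    """
    hue = scale(bpm, 60, 180, 0.66, 0.0)
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return tuple(round(c * 255) for c in (r, g, b))


if __name__ == "__main__":
    # The rule is fixed; the data decides what the piece looks like.
    for bpm in (55, 90, 130, 175):
        print(bpm, "bpm ->", heart_rate_to_rgb(bpm))
```

The artist writes the rule once, and each person's data produces a different result from it.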

How are you using AI? Do you see it as a collaborator, an instrument, or raw material?

I’m definitely using AI in all the ways everyone else is right now.

I love using it for visualization. Sometimes when I sit down to render a 3D model I know exactly what I want. Sometimes I don’t, so it’s an exploration. Throwing an idea into Midjourney and seeing the accidental outcomes can inspire new directions.

I also use AI to program—to create functional Arduino or ESP32 code, Python commands for TouchDesigner, and to troubleshoot tech problems that would have required much more expertise before.

It’s definitely a superpower, but I’m not using it in ways where AI is the dominant creator of the work. It’s a collaborator.

In this new chapter of your creative life, what excites you and what still feels uncertain?

I think it’s a great time to reinvent what being an artist means. The balance between art and commerce is interesting. I’ve been lucky to get projects funded by companies without putting too much branding on them.

I always say there’s the front door to MoMA and the back door to MoMA—I’m trying to get in through the back door by doing things a different way.

The tools right now are incredible. You can render anything, laser cut anything. If you can imagine it, you can do it. It’s a very exciting time to create.

What’s uncertain is how long it lasts. I’m optimistic, but it’s always a little scary. What I do now is going to make the phone ring the next time, and I won’t be in control of who’s calling. A creative career takes a lot of work to keep on the path you want to follow.

Right now I’m overloaded with client projects, and I’d love to get more time to focus on the work I’m doing for myself: translating data from people’s daily activities into art.

Is there anything you’re trying to figure out right now that you don’t understand yet?

Scaling up. Working larger. Getting funding for larger-scale projects.

I can build a light installation in my studio that fits on someone’s wall, but I want to build it at Dubai scale, like an airport wall. Everything right now is about scale.

This interview has been lightly edited for clarity.